Is Claude Too Woke For War?

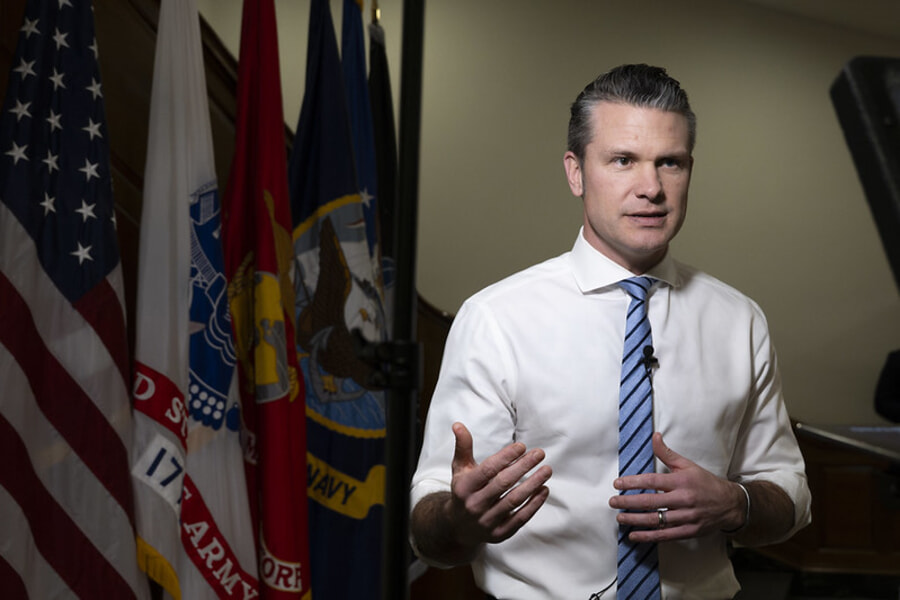

This week, U.S. Defense Secretary Pete Hegseth delivered an ultimatum to Anthropic: allow unrestricted military use of its artificial intelligence (AI) models by Friday or face harsh punishments. This raises the question: When it comes to military use of AI, who exactly should be setting the rules?

At issue for the Department of Defense are safeguards intended to prevent accidental or malicious use of AI. The Pentagon argues that AI is no different from any other technology and that decisions about how it is used should be left to the military.

In mid-January, Hegseth spoke about accelerating AI deployment within the Defense Department and eliminating barriers that prevent deploying the technology to the battlefield. Hegseth railed against “equitable AI, and other DEI and social justice infusions that constrain and confuse our employment of this technology …. We will not employ AI models that won’t allow you to fight wars.”

So far, Claude is the only model to have been approved for Defense Department classified work, although the Pentagon this week negotiated a deal for xAI’s Grok. Regarding Claude, one department official told Axios, “[W]e need them and we need them now,” because, “they are that good.” If Anthropic doesn’t cave, Hegseth has reportedly threatened to either force the company to remove its limits by invoking the Defense Production Act, or declare Anthropic a supply chain risk and freeze it out of the department’s supply chain. It’s like an abusive relationship: We must have you or no one can.

We have some sympathy with Hegseth’s position here, if not his methods. Claude’s constitution, known within Anthropic as its soul document, was published the week following Hegseth’s speech. Parts of it, such as prohibitions on harming others, are clearly not practical for the military.

However, Anthropic has stated that Claude’s constitution doesn’t apply to special-purpose models. When it comes to military uses, CEO Dario Amodei has specified only two hard red lines. He said models should not be used for the mass surveillance of Americans or in autonomous weapons that fire without a human in the loop. Anything else is fine.

That’s hardly transforming Claude into a social justice warrior singing Kumbaya around the campfire!

Regardless, there is a strong case that rules about military use of AI should be in the hands of Congress, rather than being decided in an argy-bargy between the secretary of defense and a private-sector CEO. The Pentagon’s chief technology officer, Emil Michael, acknowledged this when he framed Anthropic’s position as “undemocratic.”

Congress writes bills, the president signs them, and agencies comply, he said. “What we’re not going to do is let any one company dictate a new set of policies above and beyond what Congress has passed.”

This Lawfare article expands on arguments for congressional involvement. One of the more compelling ones is simply that Congress’s role is to make decisions when difficult trade-offs are involved.

We agree but would add that much of the disagreement between the Pentagon and Anthropic has arisen because they have different views of what Claude is. The Pentagon views it as a tool that should be available to deploy whenever and wherever it likes. Anthropic views it as an entity, like an idiot savant that can do amazing things but needs special training or guidance to help it work better. That’s what Claude’s constitution is for. It is incorporated into the training of mainline models to help them make better decisions.

The distinction between entity and tool becomes more important as models become more capable and are trusted with more important jobs. Military personnel are indoctrinated with military values, so a machine intended to replace human decision-making needs to understand those values too. That means appropriate training for military models would involve more than removing Claude’s constitution; it would involve replacing it with something else.

We have no idea what Claude’s “warrior ethos” will look like as it takes shape over time, but it will surely be more nuanced than any black-and-white legislation Congress might pass. This is a fast-moving space in which training methodologies and Defense Department uses will change quickly.

We think some hard-and-fast rules will be worth legislating, but Congress should also take a mentoring role. It needs to find transparency and oversight mechanisms that ensure both the Defense Department and companies are developing and using models in ways that reflect American values and support U.S. interests.

– Tom Uren writes Seriously Risky Business, a big-picture, policy-focused cyber security newsletter. Published courtesy of Lawfare.